
Mar 11, 2026

Home Health Claim Denials Are Not Billing Problems

Arvind Sarin

I talk to home health agency owners every week. And when the conversation turns to denials, I hear the same story with remarkable consistency.

The billing team submits the claim. The denial comes back. The team works through the appeal. Sometimes they win, sometimes they do not. They move on. The next month, the same denials come back for the same reasons. The same appeal process runs again. Everyone is exhausted, the revenue cycle is slow, and nobody has time to fix what is actually causing the problem because they are too busy fighting the symptoms of it.

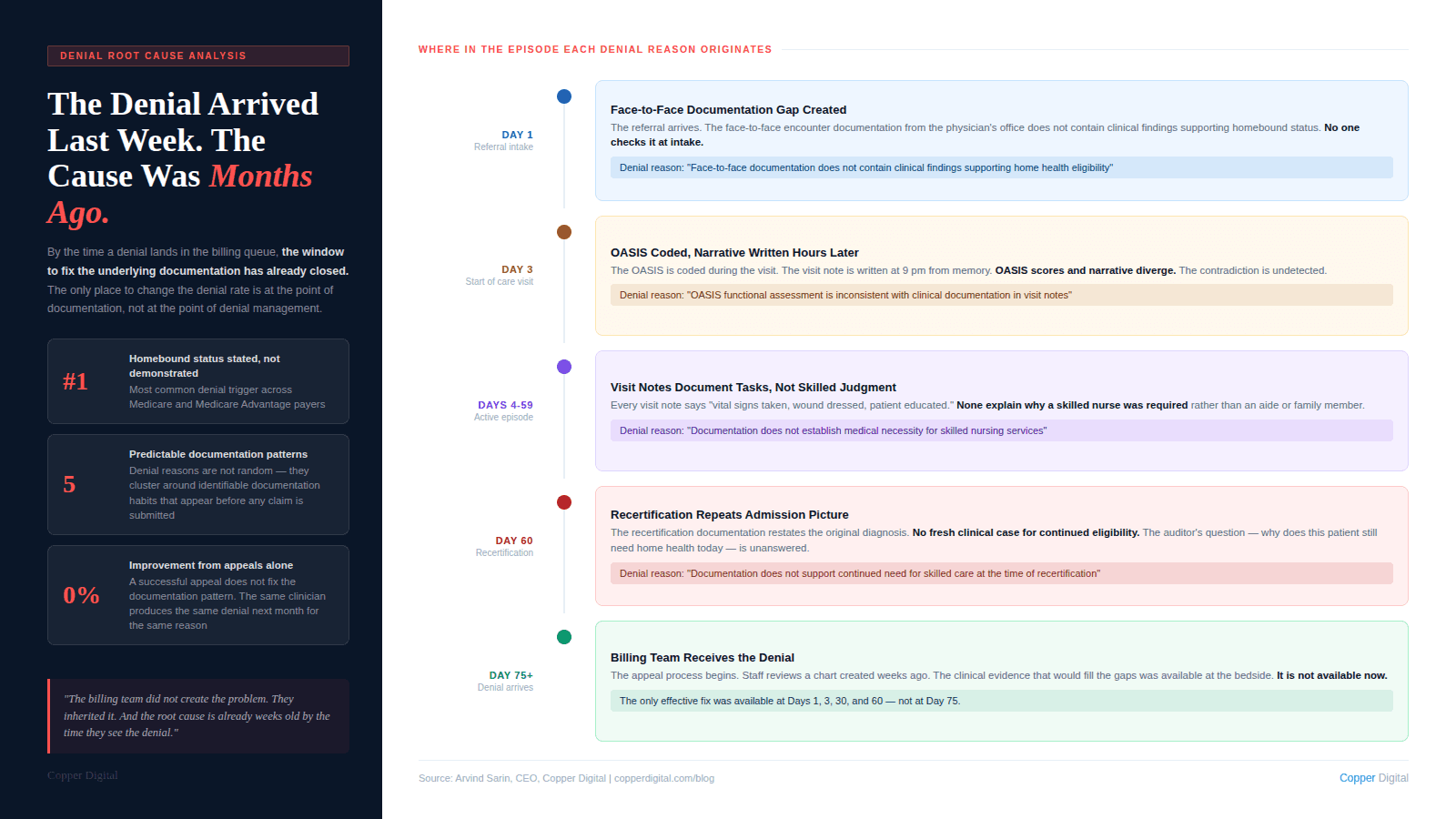

What strikes me every time is how predictable the denial reasons are. They are not random. They are not the result of a payer looking for an excuse to not pay. They are the result of specific, identifiable documentation patterns that appear in the chart before the claim is ever submitted. The billing team did not create the problem. They inherited it. And by the time the denial arrives, the window to fix the underlying documentation has already closed.

I want to walk through how I think about this problem, because I believe the mental model most agencies use for denials is the wrong one. And the wrong model produces the wrong solution. The audit-side version of this problem is covered in detail in our post on ADR and TPE audit defense. This post is about the broader denial picture, including the patterns I see most often and where the actual fix lives.

Why the Standard Denial Management Model Does Not Work

The standard model for denial management in home health looks like this: claims are submitted, denials come back, a denial management team or billing staff works the denial queue, appeals are filed, outcomes are tracked, and the cycle repeats.

This model is built around recovery. It assumes denials will happen and builds a process for responding to them. That is not unreasonable. But it misses the more important question: why are these specific claims being denied, and what would have to be different in the clinical documentation for them to have been paid on first submission?

When I look at denial data from home health agencies, the answer is almost always the same. The denial is not about what happened during the visit. It is about what was or was not written down before, during, and after the visit. The care was appropriate. The patient needed the service. The clinician delivered it. But the documentation could not prove it to an external reviewer.

By the time a denial arrives, you are managing the consequences of documentation decisions that were made weeks or months ago. The only place to fix the denial rate is upstream, in the documentation workflow, not downstream in the appeals queue.

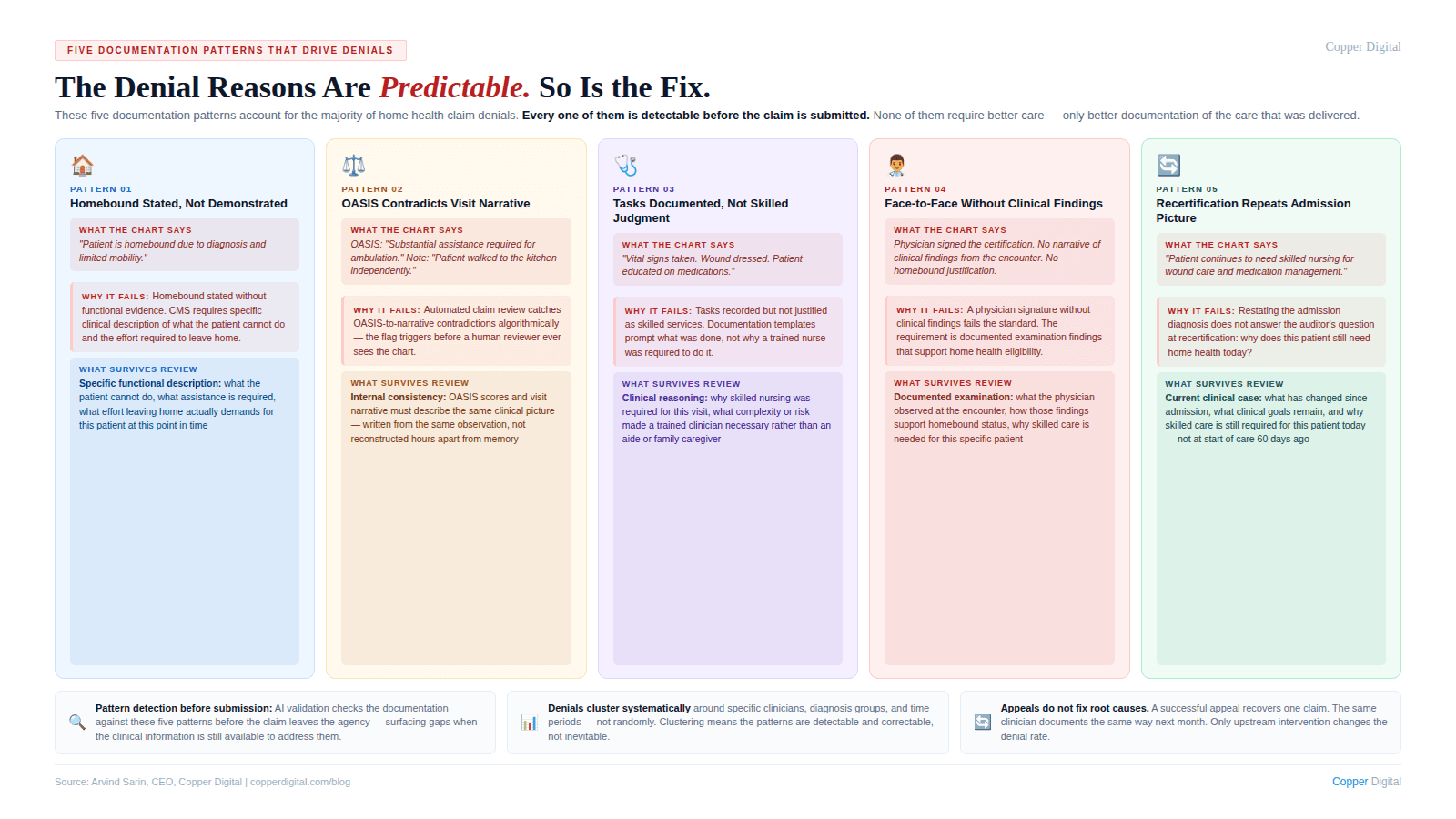

The Five Documentation Patterns That Generate the Most Denials

Across my conversations with agency owners and the clinical experts we work with at Copper Digital, including Kathy Duckett, who has been coaching home health clinicians for over 30 years, these are the documentation patterns I see driving the highest denial volumes.

1. Homebound status stated but not demonstrated

This is the single most common denial trigger I hear about. The chart says the patient is homebound. The OASIS has the right homebound qualifier checked. But nowhere in the clinical record is there a description of what, specifically, makes leaving home a considerable and taxing effort for this particular patient. The clinical reality was there. The clinician saw it. But the documentation does not describe it. To a reviewer who was not in that home, the chart says homebound without showing it. We wrote about the specific language patterns that hold up under review in Homebound Status Documentation: What It Must Say and What It Must Not Leave Out.

2. OASIS scores that contradict the visit narrative

This one is almost entirely a timing problem. The OASIS gets coded during or right after the visit. The visit note gets written hours later from memory. By then, the specific clinical details that would make the OASIS score and the narrative consistent have degraded. The result is a chart where the OASIS says one thing and the visit note implies another. Automated claim review systems catch these contradictions algorithmically. The claim gets flagged before a human reviewer ever sees it. This is exactly the documentation timing problem that Kathy Duckett described from a nursing perspective and that I think about from a systems design perspective: you cannot fix a capture problem with a review process.

3. Skilled care necessity documented as tasks, not clinical judgment

I see this pattern constantly. The visit note says: vital signs taken, wound dressed, patient educated on medications. Those are tasks. What the note does not say is why a skilled nurse was required to perform them: what clinical complexity made each of those tasks a skilled nursing service rather than something a home health aide or a trained family member could have done.

From a technology standpoint, this is a structured information problem. The clinician has the clinical judgment. It happened in the room. But the documentation template does not prompt her to explain why skilled care was required. It prompts her to check boxes for what was done. Boxes for tasks, no field for clinical reasoning. The documentation system is producing the wrong output.

4. Face-to-face documentation that does not contain clinical findings

I have talked to a lot of agency owners who were surprised when face-to-face documentation became a significant denial trigger for them. The encounter happened. The physician signed the certification. But the face-to-face note does not contain the clinical findings from the examination that would support homebound status and skilled care necessity. The requirement is not just that an encounter occurred. It is that the encounter produced documentation of clinical findings that justify home health eligibility. The full breakdown of what that documentation must contain is in our face-to-face documentation post.

5. Recertification documentation that does not make a fresh clinical case

Recertification denials are particularly frustrating because the care has been going on for 60 days. Everything was fine. And then at recertification, the documentation repeats the original clinical picture without establishing why the patient still meets eligibility criteria at this point in the episode. The auditor's question at recertification is specific: why does this patient still need home health today? The documentation has to answer that question with clinical evidence from the current period, not a restatement of the admission diagnosis. This is the documentation gap I described in our recertification documentation post.

What the Data Actually Shows About Denial Patterns

When I work with agencies to look at their denial data, a consistent pattern emerges. The denials are not evenly distributed across the patient population. They cluster.

They cluster around specific diagnosis groups where documentation requirements are more complex. They cluster around specific clinicians who have documentation habits that consistently produce the patterns I described above. They cluster around specific time periods, typically end-of-quarter when caseloads are highest and documentation time is most compressed. And they cluster around specific payers who apply more rigorous review criteria.

This clustering is important because it tells you something about causation. If denials were random billing errors, they would be spread roughly in proportion to claim volume across clinicians, diagnosis groups, and time periods. The fact that they cluster tells you they are systematic, driven by identifiable patterns in the documentation workflow that affect some clinicians, some diagnosis types, and some time periods more than others.

From a technology standpoint, this is useful information. Patterns that are systematic are patterns that can be detected, flagged, and corrected. The question is where in the workflow you intervene: before the claim is submitted, or after the denial arrives.
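One way to check for this kind of clustering in your own data is sketched below. It is a minimal illustration, not a production analysis: the record layout, the clinician and diagnosis labels, and the 1.5x threshold are all assumptions made up for the example. It also counts raw denials only; a real analysis would want to divide by claims submitted so that a clinician with a large caseload is not flagged just for volume.

```python
from collections import Counter

# Hypothetical denial records: (clinician_id, diagnosis_group, month).
# These values are invented for illustration only.
denials = [
    ("rn_01", "wound_care", "2025-03"),
    ("rn_01", "wound_care", "2025-03"),
    ("rn_01", "chf", "2025-03"),
    ("rn_02", "wound_care", "2025-01"),
    ("rn_03", "chf", "2025-02"),
    ("rn_01", "wound_care", "2025-02"),
]


def concentration(records, field_index):
    """Return each value's share of denials for one dimension, largest first."""
    counts = Counter(record[field_index] for record in records)
    total = sum(counts.values())
    return [(value, count / total) for value, count in counts.most_common()]


def over_represented(records, field_index, factor=1.5):
    """Return values whose share exceeds `factor` times an even split.

    The factor is an arbitrary illustrative threshold, not a clinical
    or statistical standard.
    """
    shares = concentration(records, field_index)
    even_share = 1 / len(shares)
    return [value for value, share in shares if share > factor * even_share]


by_clinician = concentration(denials, 0)
flagged_clinicians = over_represented(denials, 0)
```

Running the same two functions with `field_index` set to 1 or 2 gives the picture by diagnosis group or by month.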

Denial patterns are not random. They cluster around specific clinicians, diagnosis groups, and time periods. That clustering is evidence of systematic documentation patterns that can be detected and corrected before the claim is submitted, not just after the denial arrives.

The Feedback Loop Problem: Why Agencies Stay Stuck

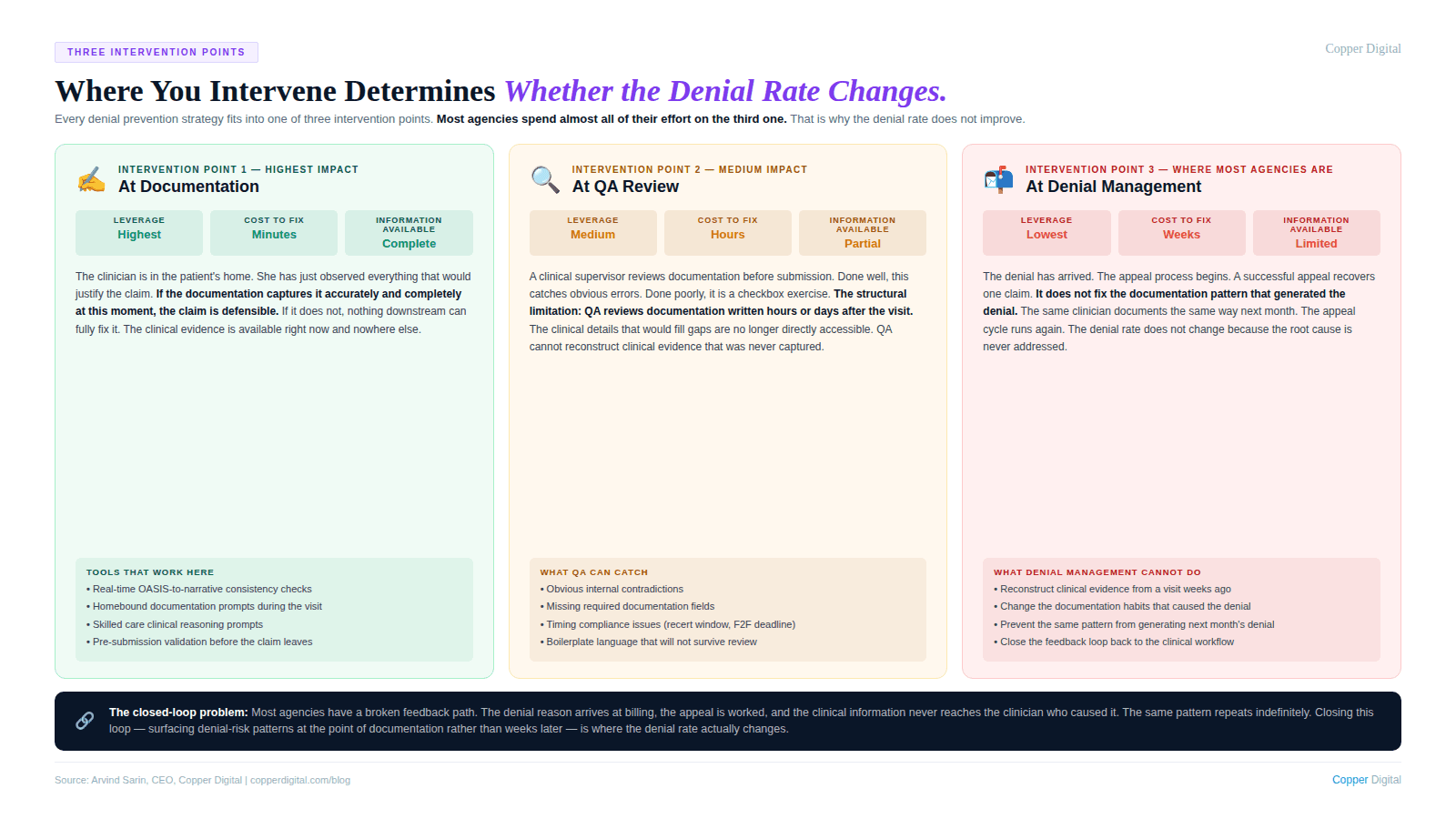

Here is something I find genuinely interesting about the denial management problem from a systems perspective. The feedback loop is broken.

When a claim is denied, the information about why it was denied arrives at the billing team. The billing team works the appeal and moves on. The clinical information, the specific documentation gap that triggered the denial, rarely makes it back to the clinician who documented the visit. It almost never makes it back in a way that changes how that clinician documents the next patient.

So the clinician with the documentation habit that is generating denials continues documenting the same way. The billing team continues fighting the same denials. The appeal win rate varies but the underlying denial rate does not change. The agency is spending money on denial management and appeal labor that is not reducing the problem, only managing it.

This is supposed to be a closed-loop control system, but the feedback path is broken. In engineering terms, you have an output (denials) that is out of spec, but the signal never gets back to the input (documentation) where the correction needs to happen. Without that feedback path, the system runs open loop and cannot self-correct.

AI-assisted documentation can close this loop in a specific way: by providing real-time feedback to the clinician at the point of documentation, before the claim is submitted, rather than weeks later through a billing denial. The system checks the documentation against the criteria that drive denial decisions. If the homebound justification is missing, the flag appears before submission. If the OASIS score and the visit narrative are inconsistent, the flag appears before submission. The correction happens when the clinical information is still available and when the cost of correction is a minute of documentation time rather than a full appeals cycle.
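To make the idea of a pre-submission flag concrete, here is a deliberately simple sketch. It is not how any particular product works: the `VisitDocumentation` fields, the flag names, and the word-count threshold are hypothetical, and a word count is only a crude stand-in for "does this narrative actually describe the limitation."

```python
from dataclasses import dataclass, field


@dataclass
class VisitDocumentation:
    """Hypothetical, simplified view of one visit's documentation."""
    homebound_checked: bool
    homebound_narrative: str = ""
    tasks: list = field(default_factory=list)
    skilled_rationale: str = ""


def pre_submission_flags(doc, min_homebound_words=8):
    """Return a list of flags to show the clinician before submission."""
    flags = []
    # Homebound is checked, but the narrative is too short to describe
    # what makes leaving home a taxing effort (crude word-count proxy).
    if doc.homebound_checked and len(doc.homebound_narrative.split()) < min_homebound_words:
        flags.append("homebound_not_demonstrated")
    # Tasks are listed, but nothing explains why skilled care was required.
    if doc.tasks and not doc.skilled_rationale.strip():
        flags.append("skilled_rationale_missing")
    return flags


thin_note = VisitDocumentation(
    homebound_checked=True,
    homebound_narrative="Patient is homebound.",
    tasks=["wound dressed"],
)
complete_note = VisitDocumentation(
    homebound_checked=True,
    homebound_narrative=(
        "Requires walker and one-person assist; dyspnea after ten feet; "
        "leaving home needs wheelchair transport."
    ),
    tasks=["wound dressed"],
    skilled_rationale="Assessed wound for dehiscence; contacted physician for order change.",
)

thin_flags = pre_submission_flags(thin_note)
complete_flags = pre_submission_flags(complete_note)
```

The point of the sketch is the timing, not the rules: the flags come back while the clinician can still add what she observed.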

The Three Places You Can Intervene in the Denial Cycle

Every denial prevention strategy fits into one of three intervention points. Most agencies are spending almost all of their effort on the third one.

Intervention point 1: At documentation (highest leverage, lowest cost)

This is where the clinical information is captured. The clinician is in the patient's home. She has just observed everything that would justify the claim. If the documentation captures it accurately and completely at this moment, the claim is defensible. If it does not, nothing downstream can fully fix it.

The tools that support this intervention point are: AI-assisted documentation that prompts for the specific clinical details that reviewers look for, real-time contradiction detection between OASIS items and narrative documentation, and pre-submission validation checks that surface missing elements before the claim leaves the agency.

Intervention point 2: At QA review (medium leverage, medium cost)

This is where a clinical supervisor or QA coordinator reviews documentation before submission. Done well, this catches documentation gaps that the clinician missed. Done poorly, it is a checkbox exercise that misses the same gaps the clinician missed.

The limitation of QA review as a denial prevention strategy is structural. QA is reviewing documentation that was created hours or days after the visit. The clinical picture that would fill the gaps is no longer directly accessible. QA can catch obvious errors and inconsistencies. It cannot reconstruct clinical evidence that was never captured.

Intervention point 3: At denial management (lowest leverage, highest cost)

This is where most agencies concentrate their effort. The denial has arrived. The appeal process begins. A clinical staff member or denial management specialist reviews the chart, constructs the strongest possible appeal argument, and submits it.

The appeal may succeed. But even a successful appeal does not fix the documentation pattern that generated the denial. The same pattern will produce the same denial next month, for the same clinician, for a similar patient. The cost of the appeal labor, the delay in payment, and the administrative burden repeat.

I am not suggesting agencies should stop doing denial management. Active denial management is necessary, and it recovers revenue that would otherwise be lost. But if it is the primary strategy, the denial rate does not improve because the root cause is never addressed.

What AI-Assisted Documentation Actually Changes in the Denial Equation

Let me be specific about what I think technology can and cannot do here, because I see a lot of vague claims about AI reducing denials and I want to be precise.

AI cannot make a clinician a better clinician. It cannot replace the observation and judgment that happen during the visit. It cannot conjure clinical evidence that was not observed.

What AI can do is structural. It can check whether the documentation that was produced from the visit contains the elements that payers and reviewers require to approve the claim. It can do this check consistently, for every patient, on every claim, before submission. It can surface the gaps while there is still time to address them.

Specific capabilities that reduce denial exposure

Homebound documentation prompts. When the clinician completes the homebound status section, an AI prompt checks whether the documentation describes specific functional limitations and the effort required to leave home, or whether it only states that the patient is homebound. The former passes review. The latter generates denials.

OASIS-to-narrative consistency checks. Before submission, the system compares OASIS functional scores with the clinical narrative in visit notes. A patient coded as requiring substantial assistance with ambulation but described as walking independently in the narrative is a contradiction that will generate a denial. The flag appears before submission, when it can be corrected in minutes.
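A toy version of that ambulation contradiction check might look like the following. Everything here is an assumption for illustration: the `ambulation_score` scale (higher meaning more assistance), the threshold of 3, and the short phrase list are placeholders, and real OASIS item codes and scoring should come from the current CMS guidance rather than from this sketch.

```python
import re

# Phrases that suggest independent walking. An illustrative list,
# not a clinical vocabulary.
INDEPENDENCE_PATTERNS = [
    r"\bwalk(s|ing|ed)? independently\b",
    r"\bambulat(es|ing|ed) independently\b",
    r"\bambulat(es|ing|ed) without assistance\b",
]


def ambulation_contradiction(ambulation_score, narrative, assist_threshold=3):
    """True when the coded score implies substantial assistance but the
    narrative describes independent walking.

    Assumes a hypothetical scale where a score at or above
    `assist_threshold` means substantial assistance is required.
    """
    if ambulation_score < assist_threshold:
        return False
    text = narrative.lower()
    return any(re.search(pattern, text) for pattern in INDEPENDENCE_PATTERNS)


flagged = ambulation_contradiction(3, "Patient walking independently in hallway.")
consistent = ambulation_contradiction(
    3, "Patient ambulates 10 feet with walker and contact guard assist."
)
```

Simple phrase matching like this will misfire on negations such as "not walking independently," which is one reason a real check needs more than keyword rules.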

Skilled care documentation prompts. When a visit note documents tasks without clinical rationale, the system prompts the clinician to describe the skilled judgment involved. Wound care performed is a task. Wound assessed for signs of dehiscence and infection with findings documented and physician contacted for order change is a skilled nursing service.

Face-to-face content validation. At intake, the system checks whether the face-to-face documentation contains clinical findings that support homebound status and skilled care necessity. If it contains only a signature and a diagnosis, the system flags it for follow-up with the referring physician before the episode begins.

Recertification continued-need check. Before a recertification is submitted, the system checks whether the documentation makes a current clinical case for continued eligibility or whether it repeats the original admission picture. The difference between these two patterns is the difference between a paid recertification claim and a denial.
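As a rough illustration of the "repeats the original admission picture" pattern, the sketch below compares the two narratives word by word using Python's standard `difflib`. The function name and the 0.8 threshold are assumptions chosen for the example, and text similarity is only a proxy: a low score does not prove the recertification makes a sound clinical case.

```python
from difflib import SequenceMatcher


def repeats_admission_picture(admission_text, recert_text, threshold=0.8):
    """True when the recertification narrative is mostly a word-for-word
    repeat of the admission narrative.

    The threshold is an arbitrary illustrative value.
    """
    admission_words = admission_text.lower().split()
    recert_words = recert_text.lower().split()
    similarity = SequenceMatcher(None, admission_words, recert_words).ratio()
    return similarity >= threshold


admission = (
    "Patient admitted after hip replacement with impaired mobility "
    "and wound care needs."
)
copied_recert = admission
fresh_recert = (
    "Wound now 2 cm with new drainage this week; gait remains unsteady "
    "and skilled assessment is still required."
)

copied_flag = repeats_admission_picture(admission, copied_recert)
fresh_flag = repeats_admission_picture(admission, fresh_recert)
```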

A Denial Root Cause Analysis Framework

If you want to understand where your denials are actually coming from, the analysis is not complicated. It requires looking at your denial data differently than most billing teams do.

Step 1: Categorize by denial reason, not by payer

Most denial reports are organized by payer. But the clinical root cause of a denial is the same regardless of which payer rejected the claim. The same homebound documentation failure can generate a denial from Medicare, from a Medicare Advantage plan, and from a commercial payer. Organizing by denial reason rather than payer shows you the documentation patterns that are driving your denial volume.

Step 2: Trace each denial reason back to the documentation decision that caused it

For each denial reason category, identify what specific documentation element was missing or insufficient. Homebound denials trace to visit notes and OASIS documentation. Skilled care denials trace to visit note narrative. Face-to-face denials trace to the referring physician's encounter documentation. Recertification denials trace to the recertification OASIS and continued-need statement.
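Steps 1 and 2 can be sketched together in a few lines. The rows below are invented, and the reason labels are hypothetical names for the categories in this post; the trace-back mapping simply restates the paragraph above.

```python
from collections import defaultdict

# Hypothetical denial export rows: (payer, denial_reason).
denial_rows = [
    ("Medicare", "homebound"),
    ("Medicare Advantage", "homebound"),
    ("Commercial", "homebound"),
    ("Medicare", "skilled_care"),
    ("Medicare Advantage", "face_to_face"),
]

# Where each denial reason traces back to, as described in Step 2.
DOCUMENTATION_SOURCE = {
    "homebound": "visit notes and OASIS documentation",
    "skilled_care": "visit note narrative",
    "face_to_face": "referring physician's encounter documentation",
    "recertification": "recertification OASIS and continued-need statement",
}


def group_by_reason(rows):
    """Regroup payer-organized rows by denial reason."""
    grouped = defaultdict(list)
    for payer, reason in rows:
        grouped[reason].append(payer)
    return dict(grouped)


def root_cause_report(rows):
    """Return (reason, denial_count, documentation_source), largest first."""
    grouped = group_by_reason(rows)
    report = [
        (reason, len(payers), DOCUMENTATION_SOURCE.get(reason, "unmapped"))
        for reason, payers in grouped.items()
    ]
    return sorted(report, key=lambda item: item[1], reverse=True)


report = root_cause_report(denial_rows)
```

In this made-up data, three different payers each denied one claim, which looks like three small problems in a payer report and one large homebound documentation problem in a reason report.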

Step 3: Identify whether the root cause is a knowledge problem or a workflow problem

Some documentation failures happen because the clinician does not know what the documentation should contain. Those are training and education problems. Many more documentation failures happen because the clinician knows what should be documented but does not have the time, the tools, or the workflow support to produce it consistently. Those are systems problems. Training solves knowledge problems. Technology solves workflow problems. Most agencies apply training to both categories and wonder why the denial rate does not improve.

Step 4: Intervene at the right point in the workflow

Once you know which documentation patterns are driving your denials and whether they are knowledge problems or workflow problems, you can design the right intervention. For workflow problems, the intervention needs to be at the point of documentation, not at the point of denial management. A QA checklist that runs three days after the visit is better than nothing. An AI prompt that runs during the visit is better than a QA checklist.

Copper Digital's AI documentation tools run pre-submission checks on every claim: homebound documentation completeness, OASIS-to-narrative consistency, skilled care clinical rationale, and face-to-face content validation. Request a demo to see how this changes the denial picture for your agency.

About the Author

Arvind Sarin is the founder and CEO of Copper Digital, an AI documentation platform for home health agencies. He built Copper Digital after learning firsthand how documentation failures drain revenue from agencies that are delivering excellent care. He speaks with agency owners, DONs, and revenue cycle leaders every week and writes about the patterns he sees at the intersection of clinical operations and technology.

Related Reading

Why Home Health Agencies Fail ADR and TPE Audits: It Is Not the Care. It Is the Documentation.

Homebound Status Documentation: What It Must Say and What It Must Not Leave Out

Face-to-Face Documentation in Home Health: What CMS Actually Needs to See

Home Health Recertification Documentation: Why Renewal Is Not Enough