Apr 6, 2026

The Power of a Podcast

Rosa Hart

There is a question I get a lot when I tell people I started a podcast: Isn't that a strange thing for a nurse to do? And I understand the instinct. Nursing is a hands-on profession. It is bedside, phone calls, and showing up. The idea that a nurse would build something digital, something scalable, something that could reach thousands of people she will never meet, does not match the picture people have of what nurses do.

But here is what I have found after years as a neuro ICU nurse and then working in stroke follow-up care: I kept having the same conversations. The same excellent conversations, answering the same excellent questions, with the same patience and depth, for patients who never got to hear the answers their fellow patients got. I was limited to whoever I could get on the phone with. And there were so many people I could never reach.

The podcast changed that. Not by replacing the conversation, but by recording it and sending it everywhere there is internet. And what that experience taught me is that technology, used correctly, does not dilute nursing. It scales it.

What Actually Happens When a Stroke Patient Goes Home

I want to start here because I think most people outside the field, and some inside it, do not fully appreciate what stroke patients and their families are navigating after discharge.

In Kentucky, where I practice, we have significant literacy limitations in some areas. We have rural communities where getting to a specialist means driving hours or staying overnight in a hotel. We have patients who are uninsured, or whose insurance is not accepted by any nearby provider. We have patients who are deconditioned, isolated, anxious, and genuinely afraid to sleep because they are terrified they will wake up and half their body will not work again.

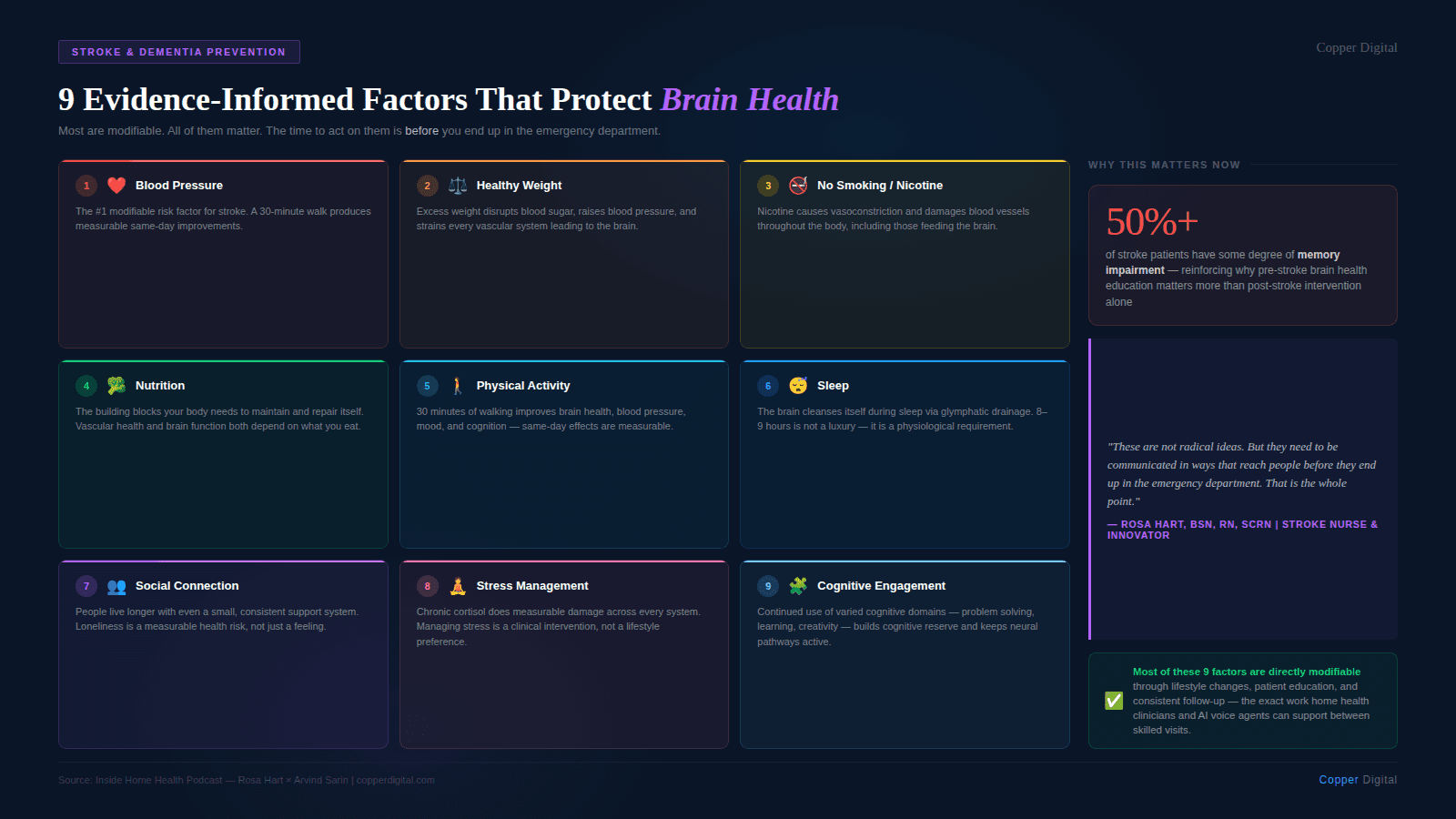

The standard of care says you have to provide written discharge instructions before patients leave the hospital. A lot of those instructions end up in the trash can. Some patients cannot read well. Others simply do not process information the same way after a stroke. More than half of the people who experience a stroke have some degree of memory impairment. And when you educate someone without a brain injury, you can realistically expect about ten percent retention. When you are educating someone who has had a stroke, and then asking them to retain that education for weeks or months without reinforcement, the math is not in anyone's favor.

I am a talker. I learn through interaction, through question and answer, through teaching back. And the patients I connect with most are the same way. Handing someone a piece of paper was never going to be enough.

If you educate someone without a brain injury, you can expect about 10% retention. To reach 100%, you need nine follow-up conversations. For stroke patients, who already have memory challenges, that repetition is not optional. It is the whole point.

The Retention Problem and What AI Can Actually Solve

Here is something I shared with Arvind Sarin when we talked, and it stopped him in his tracks. Even if your home health nurse had unlimited time and gave a patient one hundred percent of the education she was supposed to give, that patient would still need nine follow-up contacts to reach full retention. That is the math of human memory. You lose about ninety percent after the first exposure, and you get it back in increments through repetition.
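The arithmetic behind that figure can be written out as a minimal sketch. It assumes the simplification the numbers above imply: each contact adds a fixed ten percentage points of retention, so the total accumulates linearly. That is an illustrative assumption, not a validated model of memory, and the function names are hypothetical.

```python
def total_contacts(per_contact_pct: int = 10, target_pct: int = 100) -> int:
    """Contacts needed to reach the target, assuming each contact
    adds a fixed number of percentage points (linear assumption)."""
    # Ceiling division on integers avoids floating-point rounding.
    return -(-target_pct // per_contact_pct)


def follow_ups_needed(per_contact_pct: int = 10, target_pct: int = 100) -> int:
    """Follow-up contacts required after the initial education session."""
    return total_contacts(per_contact_pct, target_pct) - 1


print(total_contacts())     # 10 contacts in total
print(follow_ups_needed())  # 9 follow-ups after the first session
```

Under this assumption, one teaching session plus nine follow-ups makes ten contacts at ten percent each, which is where the "nine follow-up contacts" figure comes from.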

That means the question for home health is not whether patients need follow-up contact between skilled visits. They do. The question is who provides it and what form it takes.

I have been doing voice-based education and follow-up care for years. I have spent time designing and working with AI agents and thinking hard about where they can and cannot do useful work in patient education. And my honest assessment is this: for the repetitive, patient follow-up contact that nobody currently has the staffing to provide, AI agents have genuine value. Not to replace the skilled nursing visit. Not to replace the clinical judgment that happens in the room. But to make the ninth phone call when there are only resources to make the first one.

What a good AI follow-up call actually needs

I care a lot about this because I have seen bad versions of it. A good AI follow-up call for a stroke patient or any complex post-acute patient needs a few things. It needs to be patient, genuinely unhurried, without the time pressure of a call center metric. It needs to be empathetic in the actual interaction, not just scripted empathy, but responsiveness to what the patient is saying. And it needs to reference what happened in the last conversation, not start from scratch every time.

When Arvind described how Copper Digital's voice agents are built, with a layered context architecture that includes the discharge paperwork, the care plan, the nursing notes from previous visits, and the most recent conversation, that is the right direction. The patient who calls back should not have to re-explain their situation. The agent should already know. That kind of continuity is something that, frankly, human nurses calling from a case management queue often cannot provide either, not because they do not care, but because they are managing hundreds of patients, and the notes do not always transfer.
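To make the idea of layered context concrete, here is a minimal sketch of how those four layers might be assembled before a follow-up call. Every name in it (`PatientContext`, `build_call_context`, the bracketed labels) is hypothetical and chosen for illustration. It is not Copper Digital's implementation, which the conversation did not describe at this level of detail.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class PatientContext:
    """Hypothetical container for the layers named above."""
    discharge_paperwork: str
    care_plan: str
    nursing_notes: List[str] = field(default_factory=list)
    last_conversation: Optional[str] = None  # None before the first call


def build_call_context(ctx: PatientContext) -> str:
    """Stack the layers from most stable to most recent so the
    agent starts the call already knowing the patient's situation."""
    layers = [
        ("Discharge paperwork", ctx.discharge_paperwork),
        ("Care plan", ctx.care_plan),
    ]
    for i, note in enumerate(ctx.nursing_notes, start=1):
        layers.append((f"Nursing note {i}", note))
    if ctx.last_conversation:
        layers.append(("Most recent conversation", ctx.last_conversation))
    return "\n\n".join(f"[{label}]\n{text}" for label, text in layers)


example = PatientContext(
    discharge_paperwork="Take prescribed medication daily.",
    care_plan="Physical therapy twice a week.",
    nursing_notes=["Visit 1: blood pressure stable."],
    last_conversation="Patient reported trouble sleeping.",
)
print(build_call_context(example))
```

The design choice worth noticing is that the most recent conversation is its own layer: it is what lets the next call pick up where the last one ended instead of starting from scratch.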

Loneliness is a clinical problem, not a social one

I want to say something specific about stroke patients in rural or isolated settings, because I think it gets underweighted in these conversations about AI in healthcare. For someone who has not talked to anyone in three days, who is afraid to sleep, who feels like they are losing their mind because the anxiety after a stroke is real, and their family does not know how to help, an empathetic voice that has time to listen is not a poor substitute for human connection. It is something.

I had a patient who called me in a panic, just out of the hospital, afraid to sleep, feeling like she was going crazy. Her husband was trying to help but did not know what to say. I sent her an episode of my podcast on coping with anxiety and depression after a stroke. She called back on Monday and said she felt like an entirely different person. Her husband knew what to say now. They had a framework. That content, recorded once, reached her when she needed it. That is what scale looks like.

For someone who has not talked to another person in days, who is isolated and afraid after a stroke, an empathetic voice that has time and is not rushing to hang up is not a compromise. It is care.

Where LLMs Are Getting Their Information, and Why It Matters

Here is something I think every clinician who creates any kind of educational content should understand right now. People used to Google their symptoms. Now they are asking large language models. And LLMs are drawing a significant portion of their answers from places like YouTube and Reddit, platforms that get a lot of interaction, not necessarily from the most clinically validated sources.

What that means practically is that if I put good, accurate stroke education on YouTube, the transcripts of that content feed into those models. The information I want patients at large to have becomes part of what the LLMs can draw from when they answer questions about stroke. It is not perfect, and it does not prevent hallucination. But it does mean that the experts who have been waiting for people to come to their highly credentialed websites are losing the information war to the platforms where people actually spend their time.

We have to meet people where they are. That is not a compromise of clinical standards. It is an application of them.

The Ethics Problem Nobody Has Solved Yet

I want to be direct about something, because it came up in my conversation with Arvind, and I think it deserves more attention than it usually gets. We are rolling out AI tools in healthcare at a pace that our liability and accountability frameworks have not caught up with.

I am involved with the American Board of AI and Medicine, which is a nonprofit made up of clinicians who are passionate about AI but equally passionate about protecting patients. One of the things we keep coming back to is the question of who is responsible when AI makes an error and a patient is harmed. Right now, licensed clinicians are liable. The AI is not. But those same clinicians may not have been adequately looped into the process, may not have been given time to do proper checks, and may be held responsible for something they did not meaningfully review.

I do not have the answer to that. I do not think anyone does right now. But I know that we cannot just deploy these tools and assume the liability falls on whoever is technically in the chain of care. That is not fair, and it will create a backlash from the clinical community that will slow the adoption of tools that could genuinely help patients.

What actually makes AI adoption work in clinical settings

I just came back from ViVE, a major health technology conference, and I heard a phrase repeated by multiple companies: death by pilot. An AI solution gets piloted and then goes nowhere. The consistent reason is that it was built without adequate clinician input and handed to clinicians who were not trained on it, and then the developers were surprised when the clinical staff could not work with it.

This is not a mystery. If you are building a tool that clinicians are going to use, and you do not build it with clinicians, you are going to build the wrong thing. I was glad to hear that Copper Digital has a clinical advisor embedded in their product development, Kathy Duckett, whom I had not met but whose approach to advocating for nurse-centered EMR design is exactly the right instinct. You cannot build tools for nurses by going to the CEO and the board. You have to get the field nurses in the room.

The same is true for patient-facing AI. If you are building something that a stroke patient with memory impairment is going to interact with, you need to understand what that patient's cognitive reality looks like. You need to understand the literacy context. You need to test with real patients in real conditions, not with the ideal user in a conference room demo.

If I Had a Billion Dollars

Arvind asked me this question at the end of our conversation, which was a bit of a turnabout since it is a question I usually ask my guests. But I will give you the same answer I gave him.

The American Nurses Foundation did a survey of philanthropy in healthcare. About three hundred billion dollars a year is given in healthcare philanthropy. One percent of that is directed toward nursing. One penny on the dollar.

If I had a billion dollars to fix something in healthcare, I would put it toward nursing-led solutions. Not because nurses need to be centered for its own sake, but because nurses are the ones who see the downstream effects of every gap in the system. They see what happens when a patient goes home without understanding their discharge instructions. They see what happens when someone cannot afford their follow-up appointment. They see what happens when the care plan does not account for the patient's actual life. And they are consistently the last people consulted when solutions to those problems are being designed.

Nurses who speak up about what they see, who build solutions, who create content, who go beyond the bedside and the phone call to scale their impact, are doing something the system needs. Not because they are replacing anyone. But because there are not enough of them to do it one patient at a time.

Listen to the full conversation between Rosa Hart and Arvind Sarin on the Inside Home Health podcast on our YouTube channel. To learn how Copper Digital supports home health agencies with AI-assisted documentation, voice agents, and patient education tools, request a demo.
